Why Does Thinking Feel So Hard?

Effort Feels Costly When the Brain Can't Detect Progress

In the 1930s, R. H. Waters took the well-established idea that organisms conserve effort and applied it directly to learning. He claimed that students instinctively chose the route that demands the least mental work. But strangely, they did this even when they knew a more effortful pathway would lead to deeper understanding. At a time when psychology was dominated by behaviourism and education was steeped in what would later become the moral language of grit and discipline, this was an uncomfortable proposition. Effort was seen as a virtue, not a variable. Waters’ suggestion that learners actively minimise mental effort, not out of laziness, but as a basic cognitive strategy, was both unconventional and ahead of its time.

Studies from the period, including Waters’ own 1937 paper, showed that animals navigating mazes often avoided the shortest physical route and instead chose the path that was simplest, most predictable, and least cognitively taxing. As Zhu and colleagues note, these animals displayed a preference for routes that reduced uncertainty, decision points, and the risk of error, even if doing so required travelling farther.

This refinement matters: it suggests that “least effort” is not merely about saving physical energy but about minimising mental complexity. In this light, Waters’ claim looks less like an oddity of the behaviourist era and more like an early glimpse of a principle that modern cognitive science now formalises with concepts such as cognitive load, effortful thinking, and control costs.

The universal dislike of effort

Why does thinking feel so hard? By “thinking” I don’t mean daydreaming. I mean controlled cognition: the deliberate, slow, effortful kind of mental work that requires you to hold information in mind, update it, suppress distractions, make comparisons, and keep track of what matters. For whatever reason, our minds simply don’t want to do this. In fact, they would rather do almost anything else. Given the obvious benefits of careful thought, this seems to me a strange evolutionary adaptation.

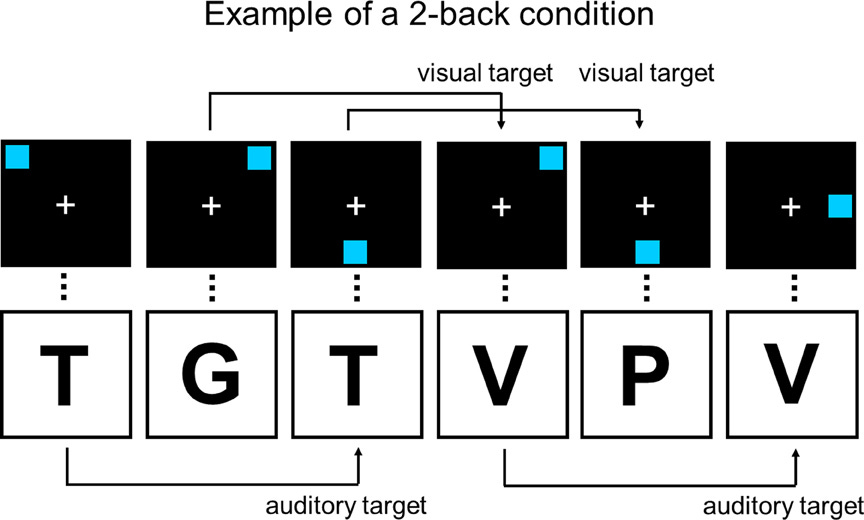

Nearly a century after Waters’ claim, modern neuroscience is still grappling with this idea of effort minimisation, now understood not as a quirk of behaviour but as a fundamental organising principle of the brain. When psychologists study effort today, they often turn to the N-back task, a deceptively simple working memory test where letters appear one by one on a screen and you must decide whether the current item matches the one shown N steps earlier. A 1-back requires you to compare each item with the previous one; a 3-back forces you to juggle and update three items continuously. The cognitive demand rises sharply with each increase in N. You can try it yourself here.
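For readers who think in code, here is a minimal sketch of the N-back rule itself. The function names and the example letter sequence are my own illustrative choices, not taken from any particular study or software package.

```python
def n_back_targets(stimuli, n):
    """Mark each position True if its item matches the item n steps earlier."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # The first n items have nothing to be compared with, so they are never targets.
    return [i >= n and stimuli[i] == stimuli[i - n] for i in range(len(stimuli))]


def score_responses(stimuli, n, responses):
    """Fraction of trials on which a yes/no response agrees with the true answer."""
    targets = n_back_targets(stimuli, n)
    if not targets:
        raise ValueError("stimuli must not be empty")
    correct = sum(r == t for r, t in zip(responses, targets))
    return correct / len(targets)


# Hypothetical letter stream, chosen only for illustration.
letters = list("ABACABCA")
print(n_back_targets(letters, 2))
print(n_back_targets(letters, 3))
```

The rule is a one-line comparison. The difficulty lies in what a person must hold and refresh in mind to apply it: for a 3-back, the last three letters, shifted along on every single trial.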

Give someone a choice between a 1-back and a 3-back and they will almost always choose the easier option. Raise the stakes and offer them money and they will still avoid the harder version. Offer them mild physical pain instead of a very difficult N-back and many will take the pain. This is not irrational. It is consistent, predictable and ancient.

But here’s the puzzle: why does effort feel costly? Lots of models merely assume it is costly but few explain the origin of the cost.

Three theories of cognitive effort costs

It turns out this is not a character flaw, nor a problem of motivation. A fascinating new paper by Otto, Westbrook, and Daunizeau offers the clearest explanation yet for why mental effort feels costly and why our brains push back against difficult tasks even when we know they are beneficial for us.

The authors examine three distinct theoretical accounts, each drawing from different scientific traditions and offering explanations at different levels of analysis. These frameworks echo long-standing debates in cognitive science: Kahneman’s foundational work on attention and effort, the controlled versus automatic processing distinction, and Marr’s hierarchy of computational, algorithmic, and implementation-level explanations. Each theory illuminates aspects of cognitive effort that the others overlook, and each carries distinct implications for how we understand the subjective experience of demanding mental work.

Processing bottlenecks and opportunity costs

The first account locates effort costs in architectural constraints on cognitive control. Controlled thinking relies on a limited, shared resource. When you allocate this resource to one task, you cannot use it for anything else. This creates opportunity costs: the value of whatever else you might have been doing with those same processing resources.

This perspective aligns with Kurzban’s opportunity cost model and contemporary accounts of cognitive fatigue, which suggest the brain continuously estimates what else it might accomplish with its limited attentional capacity. As task demands increase, moving from a 1-back to a 3-back working memory task, for instance, more of this bottlenecked resource becomes occupied. The opportunity cost intensifies, and so does the subjective experience of effort.

The account explains several familiar phenomena. Why is multitasking performance so poor? Why does sustained maintenance of information feel especially effortful? Why do working memory and cognitive control exhibit such severe capacity limits? The bottleneck view provides some answers. It also connects to theories of mental bandwidth, suggesting that scarcity itself magnifies the subjective cost of effort.

Yet the account faces a challenge. Opportunity cost alone struggles to explain fine-grained differences in subjective effort within similar tasks. If the value of alternative activities remains constant, why should a 2-back feel substantially more effortful than a 1-back? The answer may lie in the density of bottlenecking per unit time, with higher load levels imposing more intensive resource demands in each trial. But this requires additional assumptions about how opportunity costs accumulate.

Information theory and the cost of belief updating

The second framework, rooted in computational neuroscience, interprets effort costs as the informational cost of updating internal representations. Each time you transition from uncertainty about the next stimulus to recognition that the stimulus is a particular letter, you incur an energetic cost. In other words, every act of resolving uncertainty carries a metabolic price. The larger the gap between what you expected and what you learned, the higher the price.

This logic derives from information theory. The cost of an inference can be quantified by the Kullback-Leibler divergence between prior and posterior belief distributions. In simpler terms: larger updates, those that reduce greater uncertainty, demand more energy. Effort, on this view, is what it feels like to repeatedly and precisely change your mind.
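To make the idea of an “update size” concrete, here is a small sketch that computes the Kullback-Leibler divergence for beliefs over a handful of possible letters. It assumes discrete beliefs, measures the divergence of the posterior from the prior (one common convention; the direction is my assumption), and reports the result in bits. It illustrates the quantity only and is not a model of effort from the paper.

```python
import math


def kl_divergence_bits(posterior, prior):
    """KL(posterior || prior) in bits for two discrete distributions."""
    if len(posterior) != len(prior):
        raise ValueError("distributions must cover the same outcomes")
    total = 0.0
    for q, p in zip(posterior, prior):
        if q == 0:
            continue  # outcomes ruled out by the posterior contribute nothing
        if p == 0:
            return math.inf  # the prior called this outcome impossible
        total += q * math.log2(q / p)
    return total


# Before the letter appears: equally unsure among four letters.
prior = [0.25, 0.25, 0.25, 0.25]
# After it appears: certain it was the first one.
posterior = [1.0, 0.0, 0.0, 0.0]

print(kl_divergence_bits(posterior, prior))  # 2.0 bits
print(kl_divergence_bits(prior, prior))      # 0.0 bits: nothing learned, nothing updated
```

Going from four equally likely letters to certainty is a two-bit update; learning nothing is a zero-bit update. On the information-theoretic account, the first should cost more than the second.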

The account connects naturally to predictive processing frameworks, which characterise the brain as a prediction machine constantly working to minimise surprise. It also ties to principles of energy-efficient neural coding and findings about the metabolic costs of learning and memory. Just as long-term memory formation in animals imposes measurable metabolic demands, so too does the ongoing work of updating beliefs during complex cognitive tasks.

In the N-back paradigm, informational costs scale with load. Higher N levels require tracking more items, resolving greater uncertainty, and performing more updates on each trial. The divergence between pre-stimulus and post-stimulus brain states grows with N, generating correspondingly larger subjective effort costs.

This framework offers an elegant unification: it grounds subjective effort in deep computational principles whilst connecting to known biological constraints on neural metabolism. However, it remains somewhat abstract. It specifies the formal structure of effort costs without fully accounting for how the biological implementation of belief updating might itself contribute to the experience of effort.

Network control theory and the energy of reaching unstable states

The third account offers the most directly biological explanation. It treats the brain as a dynamical system whose state, the pattern of activity across regions at any given moment, evolves through time. Some states are easy to reach because they align with the brain’s intrinsic dynamics. These correspond to automatic processes. Others are difficult to reach because they are unstable or transient, requiring substantial control energy to attain and maintain. These correspond to controlled processes.

Network control theory, borrowed from engineering, provides formal tools for predicting the energy required to steer a system toward target states given its structural architecture. Different brain regions exhibit different degrees of controllability. The prefrontal cortex, owing to its distinctive connectivity profile, exhibits high modal controllability. It is ideally positioned to move the brain into hard-to-reach states, albeit at considerable energetic cost.

In this perspective, controlled processes that rely on prefrontal cortex correspond to target brain states that are inherently expensive to attain and sustain. Working memory representations, which tend to lose stability over time, exemplify such states. Successfully completing a 2-back task requires sustaining more unstable states than a 1-back task, thus demanding greater control energy. The subjective experience of effort maps directly onto this energy expenditure.
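A toy calculation shows why a state that fades quickly is expensive to reach. The sketch below uses a single number rather than a brain network: a state x that decays on every step unless an input pushes it, x[t+1] = decay * x[t] + gain * u[t]. The model, and the names `decay` and `gain`, are my own simplifying assumptions for illustration. Real network control analyses work with whole connectivity matrices.

```python
def _gramian(decay, steps, gain):
    """Scalar controllability Gramian: how far unit inputs can push the state in total."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return sum((gain ** 2) * decay ** (2 * j) for j in range(steps))


def minimum_control_energy(decay, target, steps, gain=1.0):
    """Least total squared input needed to move x from 0 to target in the given steps."""
    w = _gramian(decay, steps, gain)
    if w == 0:
        raise ValueError("the state cannot be controlled when gain is 0")
    return target ** 2 / w


def optimal_inputs(decay, target, steps, gain=1.0):
    """The input sequence that achieves that minimum energy."""
    w = _gramian(decay, steps, gain)
    if w == 0:
        raise ValueError("the state cannot be controlled when gain is 0")
    return [gain * decay ** (steps - 1 - k) * target / w for k in range(steps)]


def simulate(decay, inputs, gain=1.0):
    """Run the system forward from x = 0 and return the final state."""
    x = 0.0
    for u in inputs:
        x = decay * x + gain * u
    return x


# A state that holds well (decay 0.9) versus one that fades fast (decay 0.5).
print(minimum_control_energy(0.9, target=1.0, steps=5))
print(minimum_control_energy(0.5, target=1.0, steps=5))
```

The faster the state fades, the more input energy is needed to get it to the same place. That is the intuition behind unstable working memory representations being costly to sustain.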

This account makes contact with early work on automaticity. Once a skill becomes automatised, it effectively moves into an easier-to-reach region of the brain’s state space. It no longer requires active control energy to sustain. Before that, the control system must continuously expend energy fighting against the system’s natural dynamics. The familiar observation that “thinking deeply burns fuel” receives, in this framework, a precise computational interpretation grounded in the physics of neural networks.

What distinguishes this account is its specificity. It does not merely assert that effort is costly. It explains why certain cognitive operations demand more energy than others as a consequence of the brain’s structural organisation. The topology of the brain’s connectivity constrains which states can be reached efficiently. Subjective effort reflects the energetic price of traversing this landscape.

Each theory explains a part of why thinking is hard. Together they converge on one core principle:

Cognitive effort is costly because controlled thinking requires shifting, updating, and stabilising brain states that are energy-intensive, opportunity-expensive, and computationally demanding.

This echoes the “effort paradox” literature: effort is both something we avoid and something we value, a tension seen in both cognitive science and educational psychology. It also helps explain why students so often prefer procedural fluency to conceptual struggle: one requires pushing uphill, the other rolls downhill.

Understanding this helps us design instruction that works with the architecture of the brain, not against it.

Effort Feels Hard When the Brain Can’t See What It’s Gaining

The most striking insight from this paper for me is the idea that subjective effort may relate less to the amount of energy expended than to the perceived inefficiency of that expenditure for achieving cognitive goals. In other words, effort feels costly not simply because the brain is working hard, but because the brain cannot detect whether that work is producing results. Even when we know the work is worthwhile, it can feel, paradoxically, as though it is not.

This helps explain a puzzle that has long troubled effort research: why do some demanding tasks feel effortless whilst others feel punishingly difficult? The answer may lie in what the authors call the ‘controllosphere’, the region of the brain’s state space populated by hard-to-reach states. When a task requires sustained movement through this region, the brain’s self-monitoring systems struggle to detect progress. Metacognitive sensitivity decreases as task difficulty increases, shrinking the perceived efficiency of energy investments. Thus, allocating processing resources has less perceivable effect in a 3-back task than in a 1-back task, rendering the 3-back more subjectively effortful even if the absolute energy difference is modest.

Flow states, by contrast, feel effortless not because they are easy, but because progress is perceptible. As Csikszentmihalyi observed, flow emerges when challenge and skill are matched and improvement is detectable. The network control account offers a precise explanation: flow states involve movement through easy-to-reach brain states where the self-monitoring system can track progress, thereby compensating for the energy expenditure and enhancing perceived cognitive efficiency. The brain experiences clear improvement per unit of energy invested.

All of this has implications for learning and instructional design. A poorly designed task makes effort feel wasted because students expend energy without detectable progress; they are moving through the controllosphere with no visible landmarks. A well-scaffolded task, by contrast, makes effort feel rewarding because each increment of work produces perceptible advancement. This is not merely about making tasks easier. It is about structuring them so that progress remains visible to the learner. When students can monitor their own improvement, when they can detect that their cognitive investments are paying off, the subjective cost of effort diminishes even as the objective demands remain substantial.

Cognitive overload is about architecture, not attitude

All of this reframes the perception of laziness. Teachers often interpret student struggle as a motivational problem: students are not trying hard enough, not sufficiently engaged, lacking in resilience. This research suggests the real issue is often architectural. The subjective cost of cognitive effort can stem from unstable representations that must be continuously maintained, large updating demands as beliefs are repeatedly revised, high levels of interference between competing processes, and the saturation of bottlenecked control resources. When these factors align, tasks feel punishingly difficult regardless of motivation.

Cognitive Load Theory is helpful here because it highlights how this can be a result of poorly designed instruction. Intrinsic load rises when task elements interact heavily, demanding that students hold multiple interdependent pieces of information in mind simultaneously. Extraneous load, cognitive work that does not directly support learning, undermines the sense of progress and compounds the subjective cost. Reduce these sources of load and tasks become less aversive almost immediately, not because students have become more motivated, but because the architectural demands have been eased.

The good news is that if effort costs are architectural, they are also tractable: we can do something about them. We cannot change students’ cognitive architecture, but we can change the demands we make upon it. Well-designed curricula and instruction reduce interference through careful sequencing, stabilise representations through worked examples and scaffolding, manage updating demands by introducing new information in digestible increments, and avoid saturating control resources by eliminating extraneous processing.

This shifts the locus of responsibility from student character to instructional quality. When students find a task unbearably effortful, the first question should not be about their motivation but about the design of the task itself. Have we asked them to hold too many elements in mind at once? Have we introduced unnecessary sources of interference? Have we scaffolded appropriately to reduce the instability of the representations they must maintain? These are questions we can answer, and problems we can solve, through better instructional design.

AI Tutors Need More Than Information Theory to Calibrate Difficulty

After reviewing the paper, I contacted the lead author with a follow-up question for educators: if larger belief updates feel more effortful, could we use this principle to help teachers (or even adaptive tutoring systems) know when they’re pushing students too hard? In other words, might we measure how much a student’s understanding needs to change on each instructional step, and use that to calibrate difficulty in real time? His response was very interesting:

“One issue that we probably were circumspect about in the paper was that the KL definition of effort is a bit ill constrained in terms of making specific commitments to effort costs in concrete tasks (e.g. the N back). But still it might be a good starting point or heuristic for an adaptive AI system. I can’t imagine some idea like this hasn’t been applied before as info-theoretic techniques have very natural application in AI.”

Whilst the information-theoretic framework provides an elegant way of thinking about effort, it’s not yet precise enough to make specific predictions about how effortful a particular learning task will feel. The theory tells us that bigger jumps in understanding should feel harder, but it doesn’t yet tell us exactly how much harder, or how to measure those jumps in real classroom contexts.

Still, this framework might indeed work as a useful heuristic, a rough guide rather than a precise formula. An adaptive AI model that tries to keep students making steady progress without overwhelming them with massive conceptual leaps might well approximate what skilled teachers do instinctively when they sense whether students are ready for the next idea. We are not there yet, however.
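Purely as a thought experiment, here is what such a heuristic could look like in code. It assumes something we cannot yet do reliably: estimate a student’s beliefs before and after each candidate instructional step as probability distributions. The step names, the numbers, and the idea of a fixed “budget” in bits are all hypothetical choices of mine, not anything proposed in the paper.

```python
import math


def update_size_bits(prior, posterior):
    """KL(posterior || prior) in bits: how far a step would move the student's beliefs."""
    total = 0.0
    for q, p in zip(posterior, prior):
        if q == 0:
            continue
        if p == 0:
            return math.inf
        total += q * math.log2(q / p)
    return total


def pick_next_step(candidates, budget_bits):
    """Choose the step with the largest belief update that still fits the budget.

    candidates: list of (name, prior, posterior) tuples.
    Returns the chosen name, or None if every step exceeds the budget.
    """
    affordable = []
    for name, prior, posterior in candidates:
        size = update_size_bits(prior, posterior)
        if size <= budget_bits:
            affordable.append((size, name))
    if not affordable:
        return None
    return max(affordable, key=lambda pair: pair[0])[1]


# Hypothetical beliefs over three possible answers to a question.
prior = [0.5, 0.3, 0.2]
candidates = [
    ("small hint", prior, [0.6, 0.25, 0.15]),
    ("worked example", prior, [0.8, 0.15, 0.05]),
    ("full reveal", prior, [1.0, 0.0, 0.0]),
]

print(pick_next_step(candidates, budget_bits=0.5))
```

With a budget of half a bit, this picks the worked example: a bigger step than the small hint, but not the full conceptual leap. Everything interesting is hidden in the inputs, of course. Knowing what a student believes before and after a step is exactly the part we cannot yet measure.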

The limitation is worth noting. These theories illuminate the general structure of cognitive effort, but translating that structure into actionable instructional design remains challenging. We’re still some distance from a cognitive effort meter that could tell us, in real time, whether we’ve calibrated a lesson optimally. What we have instead are frameworks that sharpen our thinking about why some instructional sequences feel more demanding than others, even if they cannot yet prescribe exactly what those sequences should be.


