How Much Cognitive Damage Does A Phone Notification Actually Do?

The Science of Fractured Attention and Why Screen Time Is the Wrong Debate

A new study reveals that a single social media notification can disrupt cognitive processing for up to seven seconds. That doesn’t sound like much by itself, but the cumulative effects can be disastrous for the kind of focus that learning anything requires. It is also the frequency of interruptions, not the total time spent on our phones, that predicts our vulnerability to distraction. What does this mean for how we think, how we read, and our ability to pay attention?

There is a passage in Simone Weil’s Gravity and Grace where she describes attention as the “rarest and purest form of generosity”. It is, she argues, a faculty that requires the deliberate suspension of thought: a waiting, an emptying of the self so that truth might enter. What Weil understood, long before the invention of the smartphone, was that attention is not merely a cognitive resource to be allocated. It is a moral one.

I was a classroom teacher for 18 years and I thought of this often when I observed my students. Not because they were inattentive in any traditional sense; most of them were earnest and willing. Rather, because their attention had been colonised by a system designed to fracture it. The ping of a notification, the silent vibration in a pocket, the ambient awareness that something, somewhere, might require a response: these are not incidental features of modern life. They are now its architecture.

A new study published in Computers in Human Behavior by Fournier and colleagues offers one of the most rigorous experimental accounts to date of what this architecture actually does to the human mind. The findings are alarming not for their novelty (most of us already know that notifications disrupt our thinking). They are alarming for their precision, and for what they reveal about the mechanisms at work beneath our awareness.

The Seven Second Tax

The researchers designed an “ecologically valid paradigm” (basically a test done in a real classroom) in which participants completed a Stroop task, the classic measure of cognitive interference, while receiving smartphone-style notifications modelled on the iPhone interface. What distinguishes this study from earlier work is that participants in the key condition were led to believe, via an elaborate cover story, that the notifications were genuinely their own. This mattered enormously, because the disruption was not merely perceptual but personal.

The central finding: a single notification triggered a transient slowdown in cognitive processing lasting approximately seven seconds. Seven seconds may sound trivial, but in the context of sustained intellectual work, these interruptions are cumulative. The average participant in the study received over 150 notifications per day, a figure that is alarming by itself, so the aggregate cost is not a few scattered seconds but a fundamentally altered cognitive rhythm. The mind never fully settles. It hovers in a state of anticipatory vigilance, perpetually primed for the next interruption.
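To put rough numbers on that cumulative cost, here is a back-of-the-envelope sketch using only the two figures quoted above (about seven seconds per notification, over 150 notifications per day). It assumes, purely for illustration, that every notification produces the full slowdown, that slowdowns never overlap, and that a waking day lasts 16 hours; none of these assumptions comes from the study.

```python
# Back-of-the-envelope estimate of the daily cost of notifications.
# The two constants come from the figures quoted in the article; everything
# else (no overlap, full effect every time, 16 waking hours) is an assumption.
NOTIFICATIONS_PER_DAY = 150  # "over 150" in the study, so treat as a lower bound
SLOWDOWN_SECONDS = 7         # approximate length of the transient slowdown


def daily_slowdown_minutes(notifications: int, seconds_each: float) -> float:
    """Total minutes per day spent in a slowed state, assuming no overlap."""
    return notifications * seconds_each / 60


def mean_gap_minutes(notifications: int, waking_hours: float = 16.0) -> float:
    """Average minutes between notifications if spread evenly over the day."""
    return waking_hours * 60 / notifications


total = daily_slowdown_minutes(NOTIFICATIONS_PER_DAY, SLOWDOWN_SECONDS)
gap = mean_gap_minutes(NOTIFICATIONS_PER_DAY)
print(f"Slowed processing: about {total:.1f} minutes per day")
print(f"Average gap between notifications: about {gap:.1f} minutes")
```

Under these assumptions the raw total is modest, roughly a quarter of an hour. The second number is arguably the more telling one: an interruption arriving every six minutes or so leaves very little room for the mind to settle.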

What makes this finding particularly instructive is the graduated nature of the disruption. The researchers employed three experimental groups to disentangle the mechanisms at play. Participants who saw blurred, unrecognisable notifications showed only marginal disruption; the effect was barely significant. Those who saw realistic notifications from their own most-used apps, but without believing they were real, showed moderate disruption. And those who believed the notifications were genuinely theirs showed the largest effects, with a Cohen’s d of 0.62: a substantial effect by any reasonable standard.

This progressive escalation reveals something important. The disruption is not simply a matter of visual distraction, i.e. a flash of colour at the periphery of vision. It is driven by three overlapping mechanisms: perceptual salience (the notification catches the eye), conditioned association (repeated pairings between notifications and meaningful outcomes have trained the brain to attend), and, most powerfully, relevance appraisal (the notification might concern me). The last of these is the crucial amplifier. Put simply, we are not merely distracted by notifications. We are recruited by them.

Perhaps the most consequential finding is what actually predicts the magnitude of disruption. The researchers collected objective smartphone data from participants’ devices over the preceding three weeks: daily notification volume, frequency of phone checks, and total time spent on the device.

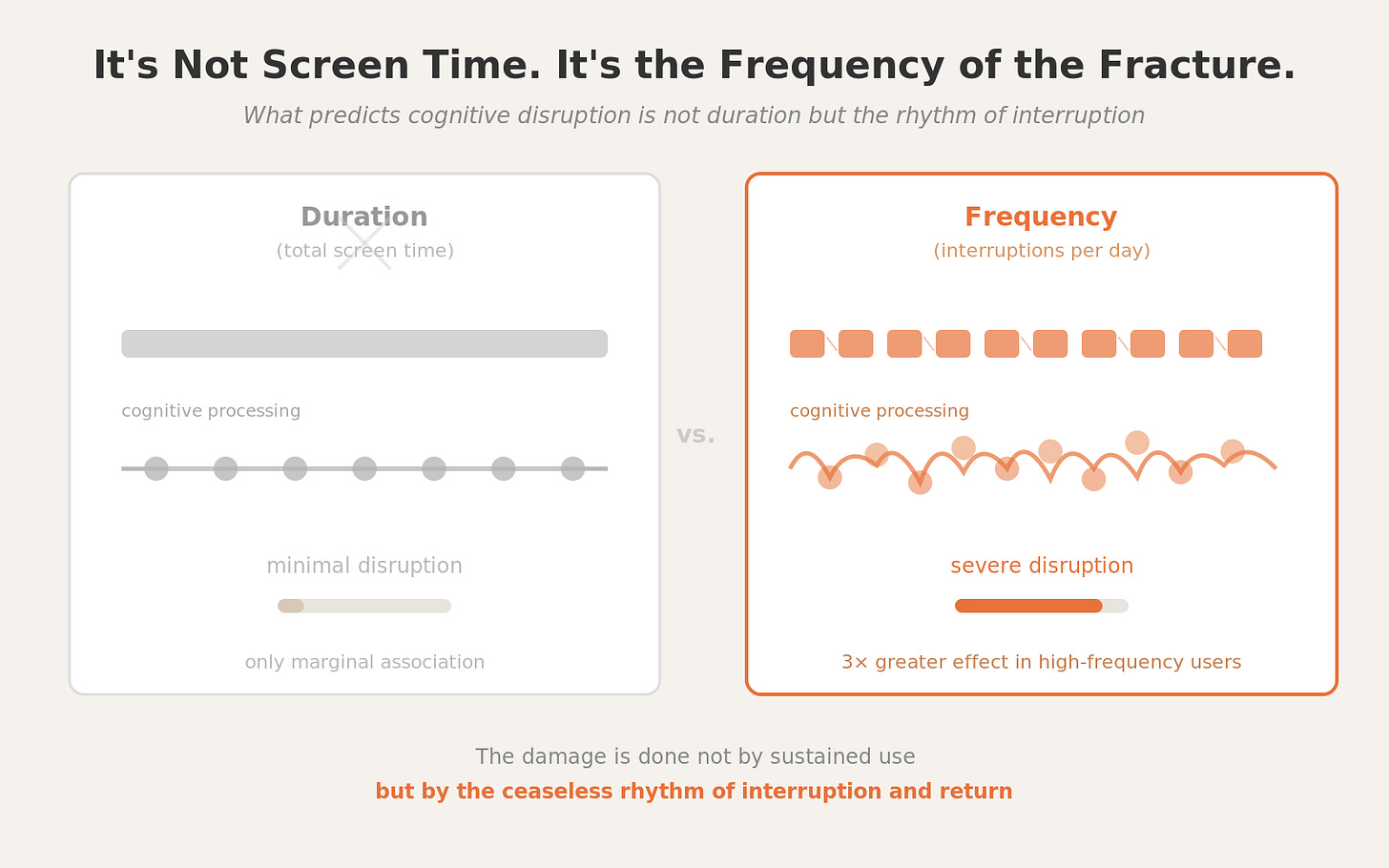

The result challenges the dominant framing of the screen time debate. Total time spent on the smartphone was only marginally associated with cognitive disruption. What predicted vulnerability was not duration but frequency: how often notifications arrived, and how often the participant reached for their device. A composite measure of “smartphone interaction,” averaging notification volume and checking frequency, proved the strongest predictor; those with the highest interaction frequency showed disruption effects more than three times larger than those with the lowest.
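As a rough illustration of what a composite “smartphone interaction” score might look like, the sketch below standardises each measure and averages the two. The article says only that the composite averages notification volume and checking frequency; the z-score step, the function names, and the sample numbers are all assumptions made here for illustration, not the authors’ actual procedure or data.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Standardise values to mean 0 and standard deviation 1 (population SD)."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Everyone has the same value, so nobody stands out on this measure.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def interaction_index(notifications_per_day, checks_per_day):
    """Hypothetical composite: mean of the two standardised measures.

    Standardising first is an assumption; it stops the measure with the
    larger raw numbers from dominating the average.
    """
    if len(notifications_per_day) != len(checks_per_day):
        raise ValueError("both lists must cover the same participants")
    z_n = z_scores(notifications_per_day)
    z_c = z_scores(checks_per_day)
    return [(a + b) / 2 for a, b in zip(z_n, z_c)]


# Made-up daily figures for four imaginary participants.
notifications = [40, 120, 200, 310]
checks = [25, 60, 110, 150]

index = interaction_index(notifications, checks)
# Participant positions ordered from highest to lowest interaction frequency.
ranked = sorted(range(len(index)), key=lambda i: index[i], reverse=True)
print(ranked)
```

Note that total screen time does not appear in the index at all. A score like this captures how often someone is pulled toward the phone, not how long they stay there.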

This distinction matters profoundly, and not only for researchers. The public conversation about digital wellbeing has been almost entirely organised around the vague term “screen time”: how many hours per day, how many minutes per session. Parental anxiety fixates on duration, but this study suggests that the relevant variable is not how long we stare at the screen, but how frequently we are pulled toward it. The damage is done not by sustained use but by the ceaseless rhythm of interruption and return.

The mechanism, as the authors suggest, is likely one of incentive sensitisation. Repeated exposure to rewarding cues (the dopaminergic kick of a like, a message, a notification) gradually sensitises the brain’s reward circuitry, endowing these stimuli with increasing attentional salience. Over time, notifications acquire the attention-capturing properties typically reserved for stimuli far more consequential: threats, faces, survival-relevant cues. A notification from Instagram, in the brain’s reckoning, begins to compete with a rustling in the undergrowth.

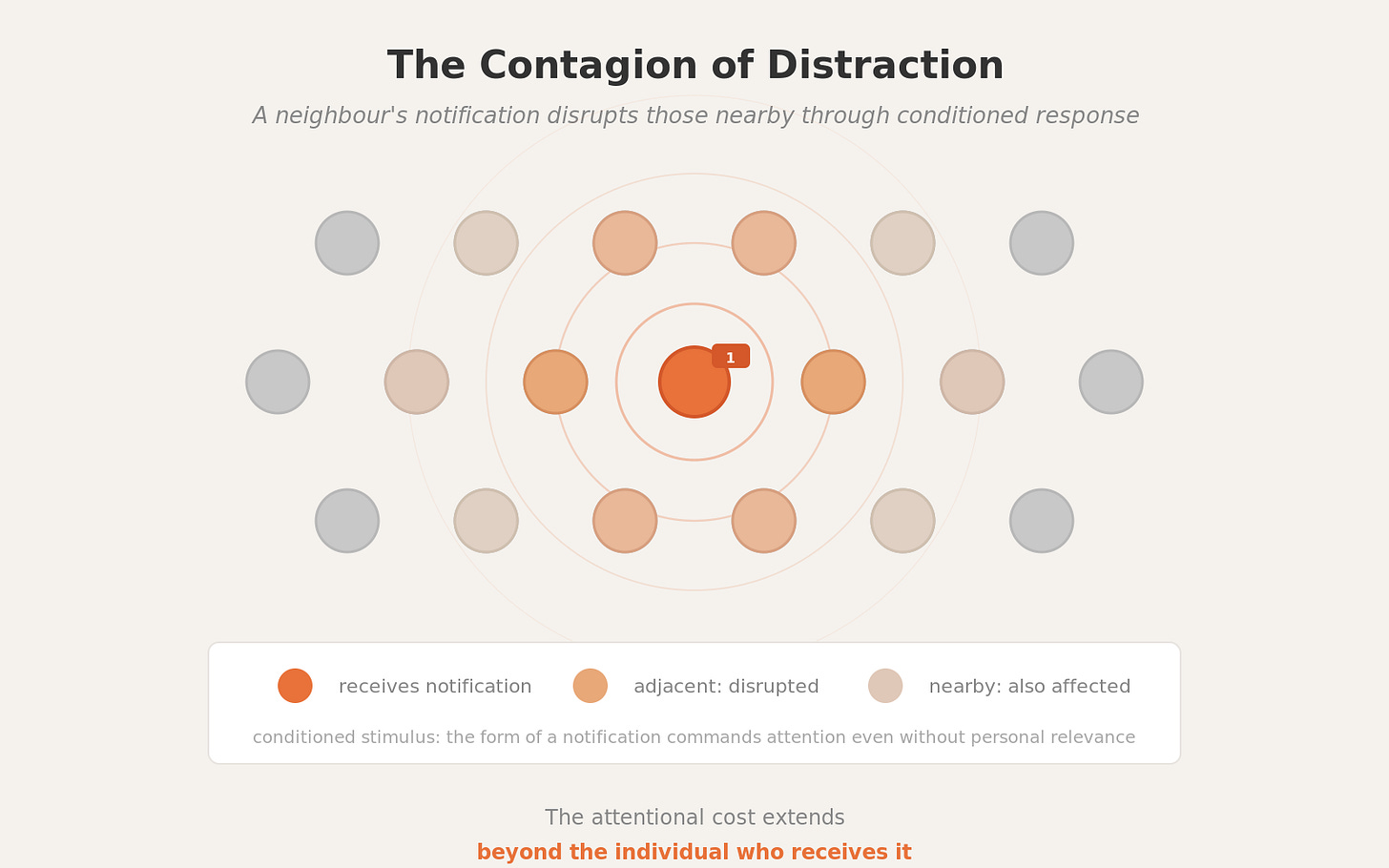

One of the study’s more unsettling implications concerns what might be called the contagion of distraction. Even in the dummy notification condition, where participants knew the notifications were not their own, the disruption was significant. The mere appearance of a recognisable social media notification (its icon, its format, its characteristic animation) was enough to capture attention and slow processing.

This means that the attentional cost of notifications extends beyond the individual who receives them. In a lecture hall, a meeting room, or a classroom, a notification appearing on a neighbour’s screen can disrupt those nearby, not because it concerns them, but because their brains have been conditioned to respond to its form. The notification has become, in the language of behaviourism, a conditioned stimulus: a cue that reliably predicts the possibility of reward, and that therefore commands attention even when the reward is not forthcoming.

The pupillometric data corroborate these behavioural findings. Notifications rated as more emotionally valenced, whether pleasant or unpleasant, produced larger changes in pupil dilation, a physiological marker of arousal. And participants who habitually received more notifications showed amplified pupillary responses, suggesting that the sensitisation effect operates not only at the level of conscious appraisal but at the level of autonomic arousal. In other words, the body responds before the mind has decided whether to care.

Every Notification Is A Small Vote For Shallowness

For those of us who work in education, this research carries implications that are both practical and philosophical. Practically, it reinforces what many teachers already suspect: that the mere presence of a smartphone in a classroom, even one set to silent mode, represents a cognitive liability. Pop-up notifications, whether seen or merely anticipated, impose a tax on the very attentional resources that learning requires. And this tax falls disproportionately on those students whose notification environments are most dense and most rewarding, which is to say, on those who are most deeply enmeshed in the platforms designed to exploit their attention, usually social media.

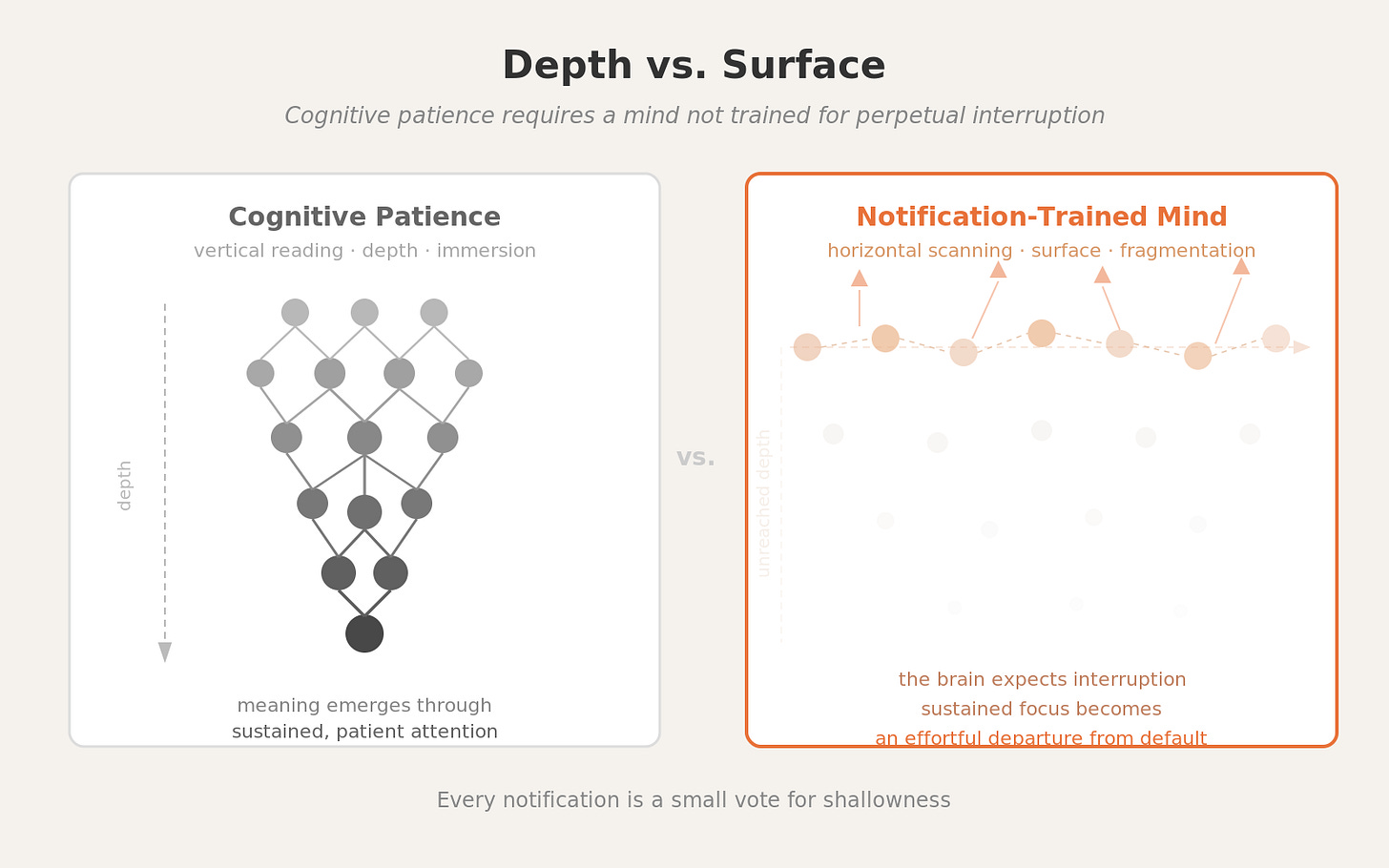

Philosophically, the study illuminates something about the nature of the reading crisis I have written about before. Maryanne Wolf’s concept of “cognitive patience,” the willingness to linger with difficulty, to sustain attention long enough for meaning to emerge, presupposes a mind that has not been trained in the opposite direction. But this is precisely what the notification environment does: it trains the brain to expect interruption, to anticipate reward at ever shorter intervals, to treat sustained focus not as the default mode of cognition but as an effortful departure from it.

The Fournier study quantifies what Wolf, Birkerts, and others have described in more literary terms. The shift from vertical to horizontal reading, from depth to surface, from immersion to scanning, is not merely a cultural preference. It has a neurological substrate, shaped and reinforced by the thousands of micro-interruptions that punctuate each day. Every notification is a small vote for shallowness.

Attention as a Thing Worth Defending

The authors are careful to note that their findings encourage a reorientation of digital wellbeing strategies. Rather than focusing exclusively on reducing screen time, interventions might more usefully target the frequency and timing of interruptions: protected periods without notifications, context-sensitive delivery systems, or simply the habit of placing the phone in another room during focused work.

This is sensible advice, but it does not go far enough. The deeper question is whether we are willing to treat attention as something worth defending, not just optimising. The platforms that generate these notifications are not neutral conduits of information. They are, as James Williams has argued, systems of “adversarial design,” engineered to work against the user’s higher-order goals, to subvert autonomy in the service of engagement metrics. To respond to this with a few adjustments to notification settings is rather like treating a structural flood with better buckets.

What the Fournier study ultimately reveals is not simply that notifications are distracting. It is that our relationship with our devices has been shaped, through thousands of daily conditioning trials, into something resembling a compulsion. The notification does not merely interrupt thought. It has been engineered to make thought interruptible.

For educators, parents, and anyone who cares about the life of the mind, this should be a matter of some urgency. Not because the smartphone is uniquely evil, but because the cognitive habits it cultivates are antithetical to the kind of sustained, effortful, patient thinking that serious learning demands. As Weil understood, attention is a form of generosity. What the notification economy asks of us is something closer to its opposite: a perpetual readiness to be claimed.

I’m excited to be coming to Australia this March for some talks and workshops on applying the science of learning.

Ironically, as I was writing a Restack of this post, a popup randomly appeared on my computer asking me if I wanted to enable Siri. I spent the next ten minutes trying to figure out how to prevent such popups from occurring in the future, to no avail. I then had to go back and try to remember what I was planning to write. So unfortunately, frequent interruptions are baked into the system of how we now work. This affects adults and children alike.

Anecdotally, I've found that task switching is a kind of "notification fatigue" on par with typical alert-style notifications. I've practically eliminated notifications, but still felt a weird kind of stress every day, that resulted in a kind of dull headache in the early evening.

I found a feature in iOS this week: Guided Access. When you enable it, you add friction for task switching. Like, it takes a triple click and unlock code to use a new app.

It's been great, because it gives the phone "a job". During the day, I now dedicate my phone to playing music.

Between this, and disabling notifications, it's completely changed my habits. I no longer just bounce around looking for texts, emails, reddit, etc, during the day. And that... completely removes a ton of sources of focus loss. It's eliminated that daily headache.

It's weird, but giving each screen ONE job at a time has been profound. I've started to do similar things with my computer. Each screen has ONE job. And if I can't dedicate a job for a screen, I turn it off and put it away.